Stories

Read Stories to learn more about key Nodeum use cases, Industries, Values and Customers.

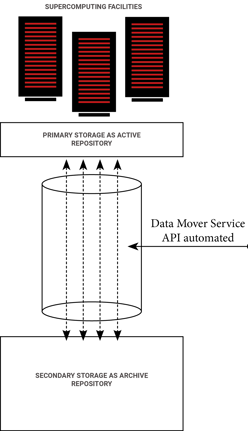

Supercomputing systems are now so performant and scalable that data is generated faster than ever. Research centers have to store the generated content in "data repositories". The supercomputing systems and the repositories are located close to each other and are well integrated.

Two categories of data repositories are used as storage tiers:

Active data repositories, which provide the performance needed when data is written by supercomputing systems.

Archival data repositories, which provide the capacity required to store all of this data.

The supercomputing infrastructure is based on scalable technologies. This is why data repositories are exploding in terms of content and usage.

These facilities need an automatic data mover engine that manages these two types of data repositories and provides the following features to users:

Manage the active storage

Organize the movement of the data from the active storage to the archive storage

Keep direct access for users.
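The three features above can be illustrated with a minimal sketch of a tiering policy. This is not Nodeum's API: the `FileRecord` class, the `select_for_archive` and `run_mover` functions, and the 30-day idle threshold are all hypothetical names and values chosen for illustration. The idea is simply that files left untouched in active storage are moved to the archive tier while their user-facing path stays the same.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class FileRecord:
    """Hypothetical record of one file tracked by the data mover."""
    path: str              # user-facing path, unchanged when the file moves
    size_bytes: int
    last_access: datetime
    tier: str = "active"   # "active" or "archive"


def select_for_archive(files, now, max_idle_days=30):
    """Return active-tier files not accessed within max_idle_days."""
    cutoff = now - timedelta(days=max_idle_days)
    return [f for f in files if f.tier == "active" and f.last_access < cutoff]


def run_mover(files, now, max_idle_days=30):
    """Mark idle files as archived and return the files that were moved.

    A real mover would copy the data to the archive repository here;
    this sketch only updates the tier field.
    """
    moved = select_for_archive(files, now, max_idle_days)
    for record in moved:
        record.tier = "archive"
    return moved
```

Because the path is kept separate from the tier, a user or application can keep addressing the file the same way after it has been archived.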

Furthermore,

The solution has to manage every type of storage (NAS, object storage, and tape).

It has to provide a public API and SDK to facilitate integration with specific research applications and to reduce the IT infrastructure latency when accessing files and data.

Data accessibility for files stored in active and in archive storage

Automated data mover

Built-in data protection mechanism

End-user Self-Service portal

Scalable central catalog of data and metadata

Automation with API

Workflow management system managed through the API or by apps

Workflow to automate the sharing of files

This platform provides the following features and capabilities:

Data movement between any data repositories.

Provide access to the archived data for any user.

Automate the data protection of each data repository.

Manage the storage of all files across all systems and provide transparent data movement workflows for any user.

Allow data protection for any type of storage system while keeping direct control of the protected files.

It is the solution when your data volume and capacity are exploding: it unifies capacity management. The indexed central catalog provides any needed information about the files managed by the ecosystem:

Status: protected, archive, online, ...

Attributes: name, creation date, modification date, size, ...

Lifecycle with regard to its data mobility, and thus its location.

Custom metadata
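As an illustration of what such a catalog entry could hold, here is a minimal in-memory sketch. It is not the product's actual schema or API: the `CatalogEntry` and `Catalog` classes, their field names, and the `record_move` and `find` methods are assumptions made for this example. It shows the four kinds of information listed above (status, attributes, lifecycle as a history of locations, and custom metadata) and a simple search over them.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class CatalogEntry:
    """Hypothetical catalog record for one managed file."""
    name: str
    size_bytes: int
    created: datetime
    modified: datetime
    status: str = "online"                                # e.g. "online", "archive", "protected"
    locations: list = field(default_factory=list)         # lifecycle: ordered location history
    custom_metadata: dict = field(default_factory=dict)   # free-form key/value pairs


class Catalog:
    """Hypothetical in-memory catalog indexed by file name."""

    def __init__(self):
        self._entries = {}

    def add(self, entry):
        self._entries[entry.name] = entry

    def record_move(self, name, new_location, new_status):
        """Append a location to the file's lifecycle and update its status."""
        entry = self._entries[name]
        entry.locations.append(new_location)
        entry.status = new_status

    def find(self, status=None, **metadata):
        """Return entries matching the status and all given metadata pairs."""
        results = []
        for entry in self._entries.values():
            if status is not None and entry.status != status:
                continue
            if all(entry.custom_metadata.get(k) == v for k, v in metadata.items()):
                results.append(entry)
        return results
```

For example, after `record_move("run-001.h5", "tape:/archive", "archive")`, a call such as `find(status="archive", project="climate")` would return that entry if its custom metadata carries `project = "climate"`. The last item in `locations` is the file's current location.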

The API lets you integrate data management into your research process.

And with the logs and reports for each job, you can verify that each batch ran correctly and keep your data secure.
